A How-To Guide for Market, Brand and Advertising Tracking Studies

The reasons for conducting tracking research and the types commonly used.

Published in the May/June 2022 issue of Quirk’s Marketing Research Review

Brand health, advertising, and market research tracking are still vital to the success of any business, whether it is a fast-food franchise or a utilities provider. If you don’t know what’s happening in your particular sector of the market, how can you plan, strategize and future-proof your business?

For marketers, those of you entrusted with generating and acting upon market data, it is critical you have a complete understanding of tracking studies.

To help with this, our how-to guide for tracking studies will cover the reasons for conducting tracking research and provide detailed descriptions of the principal trackers used. It also covers key metrics, how to control variables, how to analyze data, and, of greatest importance, how to effectively report the findings of any analysis.

While methodologies have evolved and adapted (from landline phones in the old days to online panels today), the need to monitor a market frequently, if not constantly, remains. Tracking provides relevant input to the executive dashboard and is the standard by which the performance of marketing teams and agencies is evaluated.

The Need for Tracking Studies is as Prevalent as Ever

So, why conduct a tracking study? Are you concerned about your position in the competitive landscape? Worried about brand equity? Are you about to launch a new ad campaign? Are you planning to introduce a new product or line extension? Have you revised your product formulation? Do you want to test a heavy-spending media plan? Are you going to test the market with a new product?

All of these are valid objectives for conducting some form of market tracking. How you design and conduct a tracking program can vary, and both depend heavily on the level of commitment, the budget available and the types of decisions to be made.

No Substitute

Dependent variables like trial and repeat can be obtained successfully from third-party sources such as consumer panels and scanner data, but there is no substitute for asking consumers questions that provide softer data like attitudes, emotions and intentions in order to understand the “whys” behind how people ultimately behave. That is where tracking studies really shine.

Biometrics may one day provide non-conscious feedback on a reliable and scalable basis online, but for the foreseeable future, we will still be conducting tracking studies to find the answers to our important marketing questions.

By the time you have read through this white paper, you should have the answers to the following tracking-related questions:

- How do we go about designing a program that best meets our needs?

- When do we start our tracking program?

- How frequently should we conduct the research?

- When do we stop, if ever?

- Who do we target?

- How do we find them?

- How quickly can we expect to see an ROI on our efforts?

We will be focusing primarily on brand and advertising tracking examples as we continue, but the principles apply whether you are conducting tracking that relates to corporate reputation, brand health, longitudinal studies or customer experience (Figure 1).

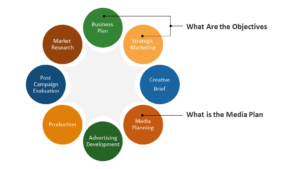

Two Key Components

The answers to the above questions largely depend on two key components of your plan: what the objectives of the research are and, for brand and advertising tracking, what the media and spending plans are.

The media plan is dependent on the objectives of the program. If the plan is to conduct heavy spending in specific markets, then the media plan needs to reflect increased spending for the brand in those markets, relative to a set of control markets. The objectives, therefore, will dictate when, where, and how to conduct your research and how your action standards for success should be created.

The first step is to determine when to conduct your research in an effort to gauge results.

Each type of tracking program listed below can be used to measure the impact of different types of objectives and will dictate the timing for in-market assessment:

- pre-post

- pre-post, test-control

- pulse

- continuous

- longitudinal

- digital tracking

- social media listening

Let’s discuss each in some detail.

Pre- and Post-Wave

In almost every situation, whether it is a heavy-spending program, a new campaign launch, a new product launch or simply monitoring new competitive activity, you are going to want to conduct pre-wave interviews to establish a benchmark for later performance.

One of the most effective ways to measure the impact of your marketing activities is by including control market(s), which will help to diagnose whether any pre-post bounce is truly due to your marketing efforts or if it is simply a result of the market’s normal course of events.

Pre- and Post-, Test-and-Control

Employing test and control markets depends on several factors including:

- Whether your program is national or regional

- If your media plan includes a ‘heavy up’ component

- Whether or not you can match other markets to the area in which you are tracking. To “match” markets, look for control geographies whose CDI (Category Development Index) and BDI (Brand Development Index) are similar to those of the test geographies. CDI is applicable if you have just entered that market, BDI if you already have an established business there. Most often, you are targeting high-BDI or high-CDI markets in order to improve the probability of successfully predicting the response of target groups in those markets.
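As a rough illustration of market matching, the sketch below computes development indices using the common definition (a market's share of brand or category sales divided by its share of population, times 100) and picks the candidate control market whose index is closest to the test market's. The market data and the single-index matching rule are simplifying assumptions; in practice you would match on several characteristics at once.

```python
from dataclasses import dataclass


@dataclass
class Market:
    name: str
    population: float      # people or households in the market
    brand_sales: float     # the brand's sales in the market
    category_sales: float  # total category sales in the market


def development_indices(markets: list) -> dict:
    """BDI and CDI per market: share of sales / share of population x 100.

    An index of 100 means the market sells in line with its share of population.
    """
    total_pop = sum(m.population for m in markets)
    total_brand = sum(m.brand_sales for m in markets)
    total_cat = sum(m.category_sales for m in markets)
    result = {}
    for m in markets:
        pop_share = m.population / total_pop
        result[m.name] = {
            "BDI": 100.0 * (m.brand_sales / total_brand) / pop_share,
            "CDI": 100.0 * (m.category_sales / total_cat) / pop_share,
        }
    return result


def closest_control(test_name: str, markets: list, index: str = "BDI") -> str:
    """Pick the non-test market whose index is closest to the test market's.

    Use index="CDI" for a brand new to the market, "BDI" for an established brand.
    """
    idx = development_indices(markets)
    target = idx[test_name][index]
    candidates = [name for name in idx if name != test_name]
    return min(candidates, key=lambda name: abs(idx[name][index] - target))


# Hypothetical markets, for illustration only.
markets = [
    Market("A", population=100, brand_sales=30, category_sales=200),
    Market("B", population=100, brand_sales=20, category_sales=200),
    Market("C", population=200, brand_sales=50, category_sales=400),
    Market("D", population=100, brand_sales=10, category_sales=200),
]
print(closest_control("A", markets))  # prints "C"
```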

In the case of any advertising tracking study, the media plan becomes an integral part of the timing of the interviewing. This should go without saying, but many times I have seen advertising testing programs where the ad spending for the brand in question was nowhere near what competitors were spending. There is no real chance of identifying differences in awareness, brand perceptions and, hopefully, behavior if your brand’s message can’t be heard. You don’t have to outspend your competitors, but you do have to clear a minimal spending threshold to break through, or you will never be able to read differences in the key metrics from the pre-wave to the post-wave and/or from test markets to control markets.
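Whether a pre-post difference is "readable" is usually judged with a significance test. A minimal sketch, assuming independent samples in each wave and unweighted simple random sampling (weighting would widen the margin of error), is a pooled two-proportion z-test; the counts below are hypothetical.

```python
import math


def two_proportion_z_test(x1: int, n1: int, x2: int, n2: int) -> tuple:
    """Pooled two-proportion z-test, e.g., % aware in the pre-wave vs the post-wave.

    Assumes two independent, unweighted simple random samples.
    Returns (difference in proportions, z statistic, two-sided p-value).
    """
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p2 - p1) / se
    # Standard normal CDF via the error function: Phi(z) = 0.5 * (1 + erf(z / sqrt(2))).
    phi = 0.5 * (1 + math.erf(abs(z) / math.sqrt(2)))
    p_value = 2 * (1 - phi)
    return p2 - p1, z, p_value


# Hypothetical waves of 1,000 interviews each: 30% aware pre, 34% aware post.
diff, z, p = two_proportion_z_test(300, 1000, 340, 1000)
print(f"diff={diff:.3f}, z={z:.2f}, p={p:.3f}")
```

With 1,000 interviews per wave, a 4-point gain from 30% to 34% falls just short of significance at the 95% level (p is roughly 0.055), which is one reason a campaign that barely moves the metrics may be impossible to read.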

Pulse vs. Continuous Tracking

Whether or not to “pulse” the reading or conduct a “continuous” read of the market will depend again on the media plan. If spending is relatively consistent after the initial launch of a new campaign, then you are going to want to read the market on an ongoing basis, carrying out a minimum number of interviews on a daily basis.

There are many advantages to continuous tracking, including the flexibility to read the market before, during and after specific competitive events, PR disasters and/or market crises (e.g., a significant drop in the financial markets or a pandemic). Additionally, continuous tracking protects you from two opposite risks: totally missing a one-time event that occurs the week after you collected data, or capturing such an event in a single wave’s data even though it may have only a minor impact on the market in the longer term. It also allows you to aggregate the data into rolling periods (e.g., rolling 4-week or 12-week periods) to smooth out the data, which increases reliability and reduces the risk of obtaining biased results.
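Rolling periods are straightforward to compute. This sketch pools weekly counts rather than averaging weekly percentages, so weeks with more interviews carry more weight; the (aware, base) input format and the numbers are hypothetical.

```python
from collections import deque


def rolling_rates(weekly: list, window: int = 4) -> list:
    """Rolling-window percentages from weekly (aware_count, base) pairs.

    Counts and bases are summed across the window before dividing, so weeks with
    more interviews carry proportionally more weight. Output starts once a full
    window of weeks is available.
    """
    buffer = deque(maxlen=window)  # oldest week drops out automatically
    rates = []
    for week in weekly:
        buffer.append(week)
        if len(buffer) == window:
            aware = sum(a for a, _ in buffer)
            base = sum(n for _, n in buffer)
            rates.append(100.0 * aware / base)
    return rates


# Hypothetical weekly results: (respondents aware, interviews completed).
weeks = [(30, 100), (36, 100), (27, 90), (40, 110), (33, 100)]
print(rolling_rates(weeks, window=4))  # [33.25, 34.0]
```

The same function gives a rolling 12-week read with `window=12`.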

Conducting pulse waves is more applicable if your media plan has predictable spikes which render continuous tracking unsuitable. For example, if your plan is to spend heavily each time you launch a new advertising campaign with new executions, or even just a pool-out of an existing campaign for a short duration and then go dark, then there is little benefit to conducting continuous tracking. In this scenario, there will be little impact on key metrics for the duration of the blackout period prior to new spending. Simply time each wave to be conducted shortly after each heavy spending period and then read the results.

However, there is no guarantee you will even see an impact, as the timing of the post-wave and the amount of spending can dictate the potential impact on key metrics such as awareness, familiarity, consideration, usage and brand perceptions. Of course, this assumes your advertising is effective, in that it drives awareness and motivates the target to want to purchase the product or service. (Pre-testing is critical before a launch and is the subject of a future white paper on Copy Testing.)

Suffice it to say that you need to spend enough on advertising to break through the clutter, and for a sufficiently long period, for your audience to take notice. There is a great deal of evidence that advertising has a lead-lag effect, so truncating your post-wave tracking can cause you to miss entirely the impact you were hoping for. Again, timing is critical.
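One common way to model the lag side of this effect is geometric "adstock", a technique borrowed from marketing-mix modeling rather than one prescribed above. Each week's effective advertising pressure is that week's rating points plus a decayed share of the previous week's pressure. The decay rate of 0.5 below is an arbitrary assumption for illustration, not a measured value.

```python
def adstock(weekly_trps: list, decay: float = 0.5) -> list:
    """Geometric adstock: effective pressure this week = this week's rating points
    plus `decay` times last week's effective pressure.

    `decay` (between 0 and 1) is an assumed carryover rate, not a measured one.
    """
    pressure = []
    carryover = 0.0
    for trps in weekly_trps:
        carryover = trps + decay * carryover
        pressure.append(carryover)
    return pressure


# Two hypothetical weeks on air, then three weeks dark.
print(adstock([100, 100, 0, 0, 0]))  # [100.0, 150.0, 75.0, 37.5, 18.75]
```

Under these assumptions, a post-wave fielded in the first dark week still sits in a period of substantial carryover, while one fielded three weeks later catches only a fraction of it.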

Longitudinal Tracking Studies

Longitudinal tracking collects data from the same respondents over time, asking the same people questions in multiple waves. This makes it very useful for tracking trends and changes over time. It is particularly helpful if you are trying to determine if a targeted group of consumers is either adopting the attitudes and behaviors of the next age cohort they are moving into or if they are taking their attitudes and behaviors with them. Think about how the Gen Z segment might change as they move into the cohort currently inhabited by Millennials.

This is obviously a time-consuming and expensive proposition and is more commonly conducted and funded by large government programs, but it does have its place in the system of tracking programs.

Longitudinal tracking can also be applied to programs where you are looking to assess if a particular group is adopting new learning or is influenced by messages that are meant to effect change. Think about campaigns that might be designed to positively influence how a group of physicians view new treatments for specific disease states. A pharmaceutical company could conduct a longitudinal assessment of the same group of physicians, which may be limited in size. These physicians may have been detailed over time and/or received specific articles or other marketing communications designed to inform and influence behavior. When the universe is small, it often helps to reach out to the same target group on multiple occasions for the sake of efficiency. When the universe is large, there is little reason to reuse samples, as doing so can lead to biased results.

And this brings up an important point about the potential dangers of longitudinal tracking programs. It is quite possible that the act of conducting a longitudinal study among the same sample can educate that population about the topic and bias future waves. Respondents may take it upon themselves to learn more about the topic and respond as they believe others might, rather than reflecting their personal attitudes and behaviors.

Over time, participants may cease to take part in a longitudinal study. This is known as attrition. Attrition can result from a range of factors, some of which are unavoidable, while others can be reduced by careful study design or practice. Attrition reduces sample size, which in turn reduces sample reliability and the potential to extrapolate results to a larger universe.

By definition, the value of longitudinal studies builds up gradually over time. However, this means that researchers need to wait for more time to pass before they can answer some key research questions.

Additionally, some of the original questions can come to seem out of date as the environment and social landscape change. Similarly, the design itself will reflect what were considered “best practices” when the program was first set up. As referenced earlier, tracking studies have changed dramatically over the past 20+ years. What makes sense to implement now may seem out of date two years from now.

Digital Tracking Studies

Digital tracking is a technique that has only become prevalent within the past ten years along with the advancement of digital advertising and digital tracking tools. Digital tracking can either be conducted in isolation or, more effectively, in concert with survey research tracking.

Access to survey data about what consumers think and their stated intent is essential for marketing, product development, and more. However, while these insights are central to understanding consumers, there is a massive opportunity for observed data to complete the picture. It is much easier to understand consumers when you combine what they told you they would do, with what they actually did. Advances in digital tracking now enable marketers to accurately gauge consumer behavior and couple those insights with needs and intent to bring the digital consumer profile into sharp focus.

Digital tracking is crucial to understanding whether or not your target audience has actually had the opportunity to view your messages online, and then to tracking their journey along the ‘path to purchase’. What the audience is being exposed to (passively or intentionally) is instrumental in determining whether your messages are reaching the right people. Digital tracking can also accurately establish what media they engage with, what sites they visit and whether or not they make a purchase. Ultimately, you would like to determine how you can positively affect behavior as consumers make their journeys on the path to purchase.

Work we carried out from 2010 to 2014 clearly indicated that consumers exposed to both digital ads and on-air advertising were much more likely to be influenced than those who were only exposed to one of them. It may seem obvious, but we were able to actually identify the level of influence each type of ad had. We accomplished this by conducting simultaneous surveying and digital tracking among the same set of respondents. This is why it is important to consider both approaches when designing a program to track the market.

Social Media Listening

This can all be combined with social media ‘listening programs’ to better understand how consumers are talking about your products/categories, determining the types of segments that exist, and key search terms that will effectively reach your target. There are several tools that exist to assist in collecting this data, some free, but most are paid-for services.

In the past, telephone survey research was the prevalent method of conducting any tracking study. As cell phones became ubiquitous, respondents were no longer willing to engage in 50-minute surveys. Call screening, homes with no landlines and the arrival of caller ID further restricted response levels from individuals who were primarily targeted during and after dinner time.

Conducting surveys online became more efficient and led to better representation of targeted markets. What didn’t change, for a long time, was the assumption that you could simply apply the same techniques that were used on telephone surveys to online surveys. Surveys remained too long, rating scales were not updated to reflect the online experience, items within rating questions stayed way too wordy and the way we collected and analyzed open-end textual data did not change.

As a result, problems that had existed during the days of telephone interviewing became even more prevalent with online surveys. Respondents who did not qualify for a survey took part anyway, sped through it, straight-lined their answers and did research of their own before answering open-ended questions in order to fit the screening criteria.

There is only a finite number of potential respondents empaneled by sample providers, all of which offer incentives to complete surveys. The problem proved even worse when the market research industry realized that many of the same respondents were being used repeatedly across different sample providers. Sample companies and research agencies have been working hard to reduce fraud by building checks into the analysis of survey data to identify and eliminate cheaters, and to reduce the number of respondents originating from the same IP address. While cooperation rates continue to decline, the quality of the data obtained has increased. Unfortunately, with fewer quality samples available, particularly for B2B research, costs per respondent have increased.
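The kinds of checks described above can be sketched as simple rules. The example below flags speeders, straight-liners and duplicate IP addresses; the field names ('id', 'seconds', 'grid', 'ip') and the speeder threshold of half the median completion time are hypothetical choices, not an industry standard.

```python
from statistics import median


def flag_low_quality(respondents: list, speed_ratio: float = 0.5) -> set:
    """Return the IDs of respondents who look like speeders, straight-liners or duplicates.

    Each respondent is a dict with hypothetical keys: 'id', 'seconds' (completion
    time), 'grid' (answers to one rating grid) and 'ip' (IP address).
    - Speeder: finished in under speed_ratio x the median completion time.
    - Straight-liner: gave the same answer to every item in the grid.
    - Duplicate: shares an IP address with an earlier respondent.
    """
    cutoff = speed_ratio * median(r["seconds"] for r in respondents)
    seen_ips = set()
    flagged = set()
    for r in respondents:
        if r["seconds"] < cutoff:
            flagged.add(r["id"])
        if len(r["grid"]) > 1 and len(set(r["grid"])) == 1:
            flagged.add(r["id"])
        if r["ip"] in seen_ips:
            flagged.add(r["id"])
        seen_ips.add(r["ip"])
    return flagged


# Hypothetical completes, for illustration only.
sample = [
    {"id": 1, "seconds": 600, "grid": [4, 5, 3, 4], "ip": "a"},
    {"id": 2, "seconds": 200, "grid": [3, 4, 2, 5], "ip": "b"},  # speeder
    {"id": 3, "seconds": 580, "grid": [5, 5, 5, 5], "ip": "c"},  # straight-liner
    {"id": 4, "seconds": 620, "grid": [2, 3, 4, 3], "ip": "a"},  # repeated IP
    {"id": 5, "seconds": 590, "grid": [4, 4, 3, 5], "ip": "d"},
]
print(sorted(flag_low_quality(sample)))  # [2, 3, 4]
```

Flagged cases would normally be reviewed rather than dropped automatically, since a single rule can catch legitimate respondents.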

So the current line of thinking mandates a number of dramatic changes to the surveys themselves, including:

• limit survey duration to no more than 20 minutes, if possible;

• trim attribute lists to no more than 12;

• reduce the number of brands rated at any one time;

• make surveys mobile-ready (limit the number of attributes per screen, shorten rating scales, fit the screen to the device);

• eliminate redundancy (there is no reason to ask stated importance if it can be derived);

• reduce the number of dependent variables (overall satisfaction, likelihood to recommend, consideration, share of wallet, constant sum, etc.; pick one!);

• eliminate irrelevant questions;

• eliminate questions you already know the answers to;

• avoid “questionnaire by committee” syndrome.

Base design on the objectives

What are the key metrics for an effective tracking study? You can’t design any questionnaire unless you base its design on the objectives of the research. For an advertising tracking study, you need to go back to the strategy that was created for the development of new advertising (the copy strategy) as well as the marketing and media plans. What is the copy trying to accomplish? Is it directed at new users or prospects, or is it designed to reassure your loyal user base? Think hard about what you are trying to accomplish and design the survey accordingly.

Figure 1 shows a way to conceptualize the types of questions you would want to include in the survey as you view the marketing funnel overall. That said, there is a standard set of questions that should be included in any tracking study and asked of everyone who passes the screening criteria. These questions should relate to the following:

• brand or advertising awareness

• familiarity (knowing the brand name alone is not enough to know the brand)

• brand experience – current portfolio of products used or purchased in the past (e.g., currently, most often, past year, past three months)

• consideration – what brands are in contention for selection?

• brand ratings – limit the scale, limit the number of brands rated and limit the number of factors they are rated on (respondents should be aware of and familiar with the brands that are being rated)

• loyalty metric – e.g., satisfaction, likelihood to recommend, share of wallet

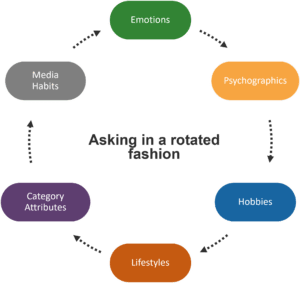

All other question areas, except for the demographic profiling section, can be covered in a rotated fashion so that not everyone is asked every question. This will help to limit the length of each questionnaire. The answers to these question areas are typically lower priority and a smaller sample for analysis is often adequate.

As an example (Figure 2), six modules (emotional assessment, category attitudes, lifestyles, psychographics, hobbies and media habits) could be split into three pairs, with each third of the sample asked one pair, so that every module is asked among the same number of respondents: one-third are asked about emotions and psychographics, one-third about hobbies and lifestyles, and one-third about category attitudes and media habits.
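The rotation in this example can be sketched as a round-robin assignment of module pairs. It assumes respondents are assigned in arrival order; a live survey would more likely randomize or assign to the least-filled group, and the module names simply mirror the example above.

```python
from collections import Counter

# Module pairs mirroring the example above; each third of the sample gets one pair.
MODULE_PAIRS = [
    ("emotional assessment", "psychographics"),
    ("hobbies", "lifestyles"),
    ("category attitudes", "media habits"),
]


def assign_modules(respondent_ids) -> dict:
    """Round-robin assignment: respondent i gets pair i mod 3, so with a sample size
    divisible by 3 every module is asked of exactly one-third of respondents."""
    return {rid: MODULE_PAIRS[i % len(MODULE_PAIRS)] for i, rid in enumerate(respondent_ids)}


assignments = assign_modules(range(1, 10))
counts = Counter(module for pair in assignments.values() for module in pair)
print(counts)  # each of the six modules is asked of 3 of the 9 respondents
```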

Constructing a tracking study

When constructing a tracking study, the first step is deciding where you are going to source your sample of people who are potentially in your target market. Although some panel companies screen their panelists for current or former brand usage or for certain characteristics or behaviors, this almost never aligns with what you’re trying to accomplish. And even if there are pre-screened panelists, there is no guarantee the data is current. It’s important to work with a trusted panel partner who can not only complete the initial project but also be available for future ones. Staying with the same partner avoids introducing an unwanted variable (a change in sample source) into the trend.

Samples of tracking studies are usually stratified using a few key characteristics that are relevant for the market(s) being investigated:

• Region – Is it national or regional or specific test and control markets?

• Age – Do we need to survey everyone aged 18+, or are there defined targets like Gen Z and/or Millennials that will require stricter definitions?

• Gender – Male and/or female? A 50/50 split or skewed in any fashion? Remember that gender is no longer defined strictly as male or female now that non-binary is an option.

• Income – For many categories, household income is a key criterion for defining a potential market target, which may require a more affluent sample.

Once fieldwork is completed, these strata are often weighted to their representation in the larger universe and balanced to previous waves of tracking to be “more representative” and to minimize bias between waves. As an example, when you design a tracking program, you might specify that a sample of 1,000 completed interviews must consist of males and females aged 18-54 with household incomes above $50,000.

In order to manage the number of completed interviews and to maintain a representative sample, you are likely to require that the interviews have quotas enforced such that:

• Half are among males and half among females.

• The age groups are divided such that there are equal numbers of completed interviews in each of the following subgroups: 18-24; 25-34; 35-44; 45-54.

• Similarly, household income might be divided into the following groups: $50,000-$59,999; $60,000-$69,999; $70,000-$79,999; $80,000-$89,999; $90,000-$99,999; $100,000+.

• Geography is usually divided by time zone or the four Census regions and the nine divisions within them.
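Independent (non-interlocking) quotas like these can be enforced with a small tracker that accepts a qualified respondent only if every cell they fall into still has room. This is a hypothetical sketch, not any survey platform's API; the cell targets mirror the 1,000-interview example above for gender and age, and income or geography cells could be added the same way.

```python
from collections import Counter

# Independent quotas for a 1,000-interview sample, following the example above.
QUOTAS = {
    "gender": {"male": 500, "female": 500},
    "age": {"18-24": 250, "25-34": 250, "35-44": 250, "45-54": 250},
}


class QuotaTracker:
    """Hypothetical helper that accepts a qualified respondent only if every
    quota cell they fall into still has room."""

    def __init__(self, quotas: dict):
        self.quotas = quotas
        self.counts = {dim: Counter() for dim in quotas}

    def try_accept(self, respondent: dict) -> bool:
        for dim, cells in self.quotas.items():
            cell = respondent.get(dim)
            # Reject answers outside the defined cells and cells that are already full.
            if cell not in cells or self.counts[dim][cell] >= cells[cell]:
                return False
        for dim in self.quotas:
            self.counts[dim][respondent[dim]] += 1
        return True


tracker = QuotaTracker(QUOTAS)
print(tracker.try_accept({"gender": "female", "age": "25-34"}))  # True
print(tracker.try_accept({"gender": "male", "age": "55-64"}))    # False: outside the age range
```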

The point in going through this level of rigor is to make sure you can compare your sampled population to known Census data and weight your sample back to those known data points, ensuring it represents the U.S. population aged 18 to 54. As each additional point of stratification is taken into account, the weighting scheme becomes more complex because they are all interrelated. So if you conduct a tracking study among people aged 18-54 this way, you can be confident that the data you collect can be projected onto the larger population, e.g., among U.S. adults aged 18-54, 23% are aware of the Acme brand of car polish.
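Weighting a sample back to several known margins at once is commonly done by raking (iterative proportional fitting), which adjusts the weights one dimension at a time until all margins match. A minimal sketch follows; in practice the target proportions would come from Census figures, and the ones in the example are placeholders. It assumes every target category appears in the sample at least once, and it omits the weight trimming often applied in production.

```python
def rake(rows: list, targets: dict, max_iter: int = 100, tol: float = 1e-9) -> list:
    """Raking (iterative proportional fitting) of respondent weights to target margins.

    rows: one dict per respondent, e.g. {"gender": "female", "age": "18-34"}.
    targets: {dimension: {category: target proportion}}; proportions sum to 1 per dimension.
    Every target category must appear in the sample at least once.
    Returns one weight per respondent; the weights sum to the sample size.
    """
    weights = [1.0] * len(rows)
    for _ in range(max_iter):
        largest_adjustment = 0.0
        for dim, shares in targets.items():
            # Current weighted total in each category of this dimension.
            totals = {category: 0.0 for category in shares}
            for w, row in zip(weights, rows):
                totals[row[dim]] += w
            grand_total = sum(totals.values())
            # Scale each respondent so this dimension's weighted shares hit the targets.
            factors = {c: shares[c] * grand_total / totals[c] for c in shares}
            for i, row in enumerate(rows):
                weights[i] *= factors[row[dim]]
            largest_adjustment = max(largest_adjustment,
                                     max(abs(f - 1.0) for f in factors.values()))
        if largest_adjustment < tol:
            break
    return weights


# Placeholder sample that over-represents younger men.
rows = [
    {"gender": "male", "age": "18-34"}, {"gender": "male", "age": "18-34"},
    {"gender": "male", "age": "18-34"}, {"gender": "male", "age": "35-54"},
    {"gender": "female", "age": "18-34"}, {"gender": "female", "age": "35-54"},
]
targets = {"gender": {"male": 0.5, "female": 0.5}, "age": {"18-34": 0.5, "35-54": 0.5}}
weights = rake(rows, targets)
male_share = sum(w for w, r in zip(weights, rows) if r["gender"] == "male") / sum(weights)
print(round(male_share, 4))  # 0.5
```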

Timing is key

One of the most important variables you need to control is the timing of the research. Of course, if you are conducting a continuous study, then consistency is the only concern. If research is being used to trend from previous waves, it is critical to replicate the timing of the previous reading if possible. If the post-wave was conducted as soon as the media spending stopped, then you need to continue with that practice. Again, this is not really an issue for continuous tracking, but it is important for point-in-time tracking programs.

If this is not a continuous tracking program, you need to decide how long you intend the post wave to continue. Usually, you want to allow for a lead-lag effect to take place, which means you don’t want to complete the cycle of interviewing too soon. However, how long you want it to continue can be difficult to assess, which highlights an additional benefit of continuous tracking.

Skew the outcome

Changes to any tracking study can skew the outcome, making it ineffective to compare results to those of previous waves. Whenever we see major changes in the trend of data from a tracking study that has either been changed in its design or taken over from another company, the immediate questions are: What changed? Did the market register a change, or did the results change because the study changed?

Potentially variable factors can include any or all of the following: the company managing the research; the method of execution; the sample source; changing the sample; changing the sampling geography; changes to the study’s timing; changes in survey flow or the addition/deletion of key question areas; changes to sample composition; programming errors; data tabulation errors; changing the brands being assessed; changing key characteristics of an attribute list; changing key evaluative criteria and/or rating scales. Assuming you want to maintain historical trends where possible, the following is the approach we would take to minimize the variables that could potentially impact those trends:

• Utilize the same sample source/panel provider.

• Maintain as much consistency with the previous survey as possible, particularly in the key metrics and the order in which they were obtained.

• Maintain sampling geography.

• Keep the sample composition consistent.

• Maintain survey frequency wherever possible.

It may not be possible to achieve all, or even most, of the above.

However, you can still maintain consistency by conducting a bridge wave: a method of measuring the impact of the changed variables by fielding the tracking study simultaneously on two different survey vehicles (the previous version and the new one) and then comparing the results. Comparing historical trends between the two versions can help determine how any of the variables that changed may have impacted the trends. By understanding the correlation between the two versions and the historical trends, you can develop models for calibrating the data going forward.
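A simple version of such a calibration model is a straight line fitted to paired bridge-wave readings, mapping new-version results onto the old version's scale. The sketch below assumes you have several paired readings (e.g., the same metric for several brands from both versions) and uses ordinary least squares; the function name and data are hypothetical, and with only a handful of points the fit should be treated with caution.

```python
from statistics import mean


def fit_bridge(new_readings: list, old_readings: list):
    """Fit old = intercept + slope * new by ordinary least squares on paired
    bridge-wave readings, and return a function that maps new-version results
    onto the old version's scale."""
    x_bar, y_bar = mean(new_readings), mean(old_readings)
    sxx = sum((x - x_bar) ** 2 for x in new_readings)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(new_readings, old_readings))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar

    def calibrate(new_value: float) -> float:
        return intercept + slope * new_value

    return calibrate


# Hypothetical bridge wave: awareness (%) for four brands measured by both versions.
new_version = [22.0, 35.0, 48.0, 61.0]
old_version = [25.0, 37.0, 52.0, 63.0]
to_old_scale = fit_bridge(new_version, old_version)
print(round(to_old_scale(40.0), 1))  # a new-version reading of 40% on the old scale
```

A linear mapping is only one choice; if the bridge shows that the change affects some metrics differently from others, a separate calibration per metric may be more appropriate.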

Dependent on the objectives

The processes of analyzing marketing-related data and reporting any insights are intertwined with the design of the research and the creation of the survey, all of which are highly dependent on the objectives of the research. What are you trying to accomplish by conducting a tracking program? Is it to measure the effectiveness of the ad campaign after launch, or is it more to assess brand health and to identify threats and opportunities? Maybe it is to read the impact of a heavy-up spending plan? None of the work that needs to be done can be divorced from the objectives. How you organize the data into logical time frames for evaluation, and how you weight the sample and balance it to previous waves of data, are all contingent on the objectives of the program. How do you start the process? Go back to the objectives of the research.

Let’s assume you are conducting a continuous tracking program to assess in-market ad effectiveness. What does “ad effectiveness” actually mean? Assuming the advertising executions were appropriately pre-tested and achieved their objectives against the copy strategy, you would hope to find evidence that your in-market advertising was both memorable and persuasive. And what about the media plan? Is the ad budget sufficient to break through the clutter of competitive activity so that your message is even heard? How do you know it is enough? Have you compared the media plan to competitors’ spending? One of the problems we typically run into is that competitive spending data isn’t available. Further, there is no guarantee that the media plan your ad agency creates will actually be executed as intended. It can take months to obtain in-market spending levels, so you are essentially assuming that the media plan was met and holding your breath that you have spent enough to break through the clutter of competitive spending.

How much do you need to spend to be heard, and how much spending is enough before you start to see signs of ad wear-out? Wear-out is a relative term. One definition is the point where the mix of creative, media placement and spending stops achieving a campaign’s communications objectives and generating a response or consumer interest. The first exposure to a commercial or ad is the most effective; repeated exposures ultimately lead to diminishing returns. Advertisers need to understand how exposure frequency influences consumer behavior.

In previous work we conducted in the early 2000s, we often referred to internal research that found you needed to spend somewhere in the area of 700-1,000 targeted rating points for a single ad to run its course before it needed to be replaced with a pool-out. Think about the time frame for analysis in combination with the time frame of your tracking. Unless you are conducting a continuous tracking program, you may not be able to organize your results into time frames consistent with your media plan, and so may not see the impact of any potential wear-out until it is too late. This is also why you need to overlay the spending levels on your trended data, so you can observe the relationship between the pattern of spending and its impact on key metrics like awareness, persuasion and imagery. Brand perceptions or imagery, however, do take longer to deteriorate, as they tend to follow a lead-lag pattern of decay.
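The 700-1,000 rating-point rule of thumb above can be lined up against a flighting plan to see when a single execution might approach wear-out. In this sketch the weekly plan of 100 TRPs is hypothetical; the thresholds come from the internal research cited above.

```python
from typing import Optional


def week_reaching(weekly_trps: list, threshold: float) -> Optional[int]:
    """Return the 1-based week in which cumulative TRPs first reach threshold,
    or None if the flight never gets there."""
    cumulative = 0.0
    for week, trps in enumerate(weekly_trps, start=1):
        cumulative += trps
        if cumulative >= threshold:
            return week
    return None


# Hypothetical flight: 100 TRPs a week for 12 weeks.
plan = [100] * 12
for threshold in (700, 1000):  # range from the internal research cited above
    print(threshold, week_reaching(plan, threshold))
```

At 100 TRPs per week, cumulative weight reaches 700 in week 7 and 1,000 in week 10, which suggests reporting periods that bracket those weeks.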

All of this trended data can help you to build forecasting models so that you can predict, with a certain level of confidence, how spending levels are likely to impact key metrics like awareness. And, if you can obtain in-market performance data from the client, you can validate your tracking metrics as indicators of market performance. Imagine the scenario where you can link pretesting metrics, survey tracking data, digital tracking data, spending levels and in-market trial, repeat and share to create a holistic view of pre-market, in-market and post-advertising market performance. This scenario is uncommon, so a typical analysis is a key driver analysis, in which independent variables such as brand attribute ratings are correlated with dependent variables such as satisfaction, most-often usage, repeat and likelihood to recommend. Identifying which of the many brand attributes are driving positive attitudes, and hopefully behaviors, can help refine the copy strategy going forward. Other analyses that prove helpful are perceptual maps that visually display the relationship between brands and the images that define them.
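A correlation-based key driver analysis, one common variant among several (regression and relative-importance methods are also widely used), can be sketched as follows. Attribute ratings and the outcome are per-respondent lists; the attribute names and data are hypothetical, and correlation alone does not establish that an attribute causes the outcome.

```python
from math import sqrt


def pearson(x: list, y: list) -> float:
    """Pearson correlation coefficient between two equal-length lists."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def key_drivers(attribute_ratings: dict, outcome: list) -> list:
    """Rank brand attributes (independent variables) by their correlation with a
    dependent variable such as likelihood to recommend, strongest first."""
    scores = {attr: pearson(ratings, outcome) for attr, ratings in attribute_ratings.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical ratings from five respondents (1-5 scales).
ratings = {
    "high quality": [1, 2, 3, 4, 5],
    "good value": [5, 4, 3, 2, 1],
    "friendly service": [2, 1, 4, 3, 5],
}
recommend = [1, 2, 3, 4, 5]
for attr, r in key_drivers(ratings, recommend):
    print(f"{attr}: {r:+.2f}")
```

Comparing each driver's strength with how well your brand performs on it is what surfaces the important areas where the brand is under-delivering.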

Overlaying the key driver analysis can identify important areas that are either not being delivered on or are weaknesses for your brand. Overall, the key to an impactful report is to think of it as telling a story rather than reporting the facts. There are few things in research less effective than a 100-page tracking report that lists all the data in excruciating detail. Rather, go back to the objectives and determine what is important to report and what is secondary. Create the “red thread” that weaves its way through the report, telling the story of what the team needs to know about its brand and the market in which it competes.

Learn further

There are so many areas to consider when planning, executing, analyzing and reporting a tracking study. While we have covered several of them here, we invite you to challenge us with your needs for implementing a program and to learn further with us how to make the process more effective.

Unlike many of my colleagues at TRC, I never really wanted to be in market research. My dream from an early age was to be either a locomotive conductor, a Zamboni driver or the guy at the amusement park that starts up the roller coaster. I went to college for chemistry, but quickly realized that I didn’t want to be a chemist. So I went to business school, where my goals shifted to being either a Brand Manager for a CPG company or a Madison Avenue Mad Man, complete with big expense accounts and three-martini lunches. Truthfully, they would have to pour me into a bucket if I ever consumed three martinis.
